4+ years shipping consumer products end-to-end -- from Chrome extensions to AI agent UX.

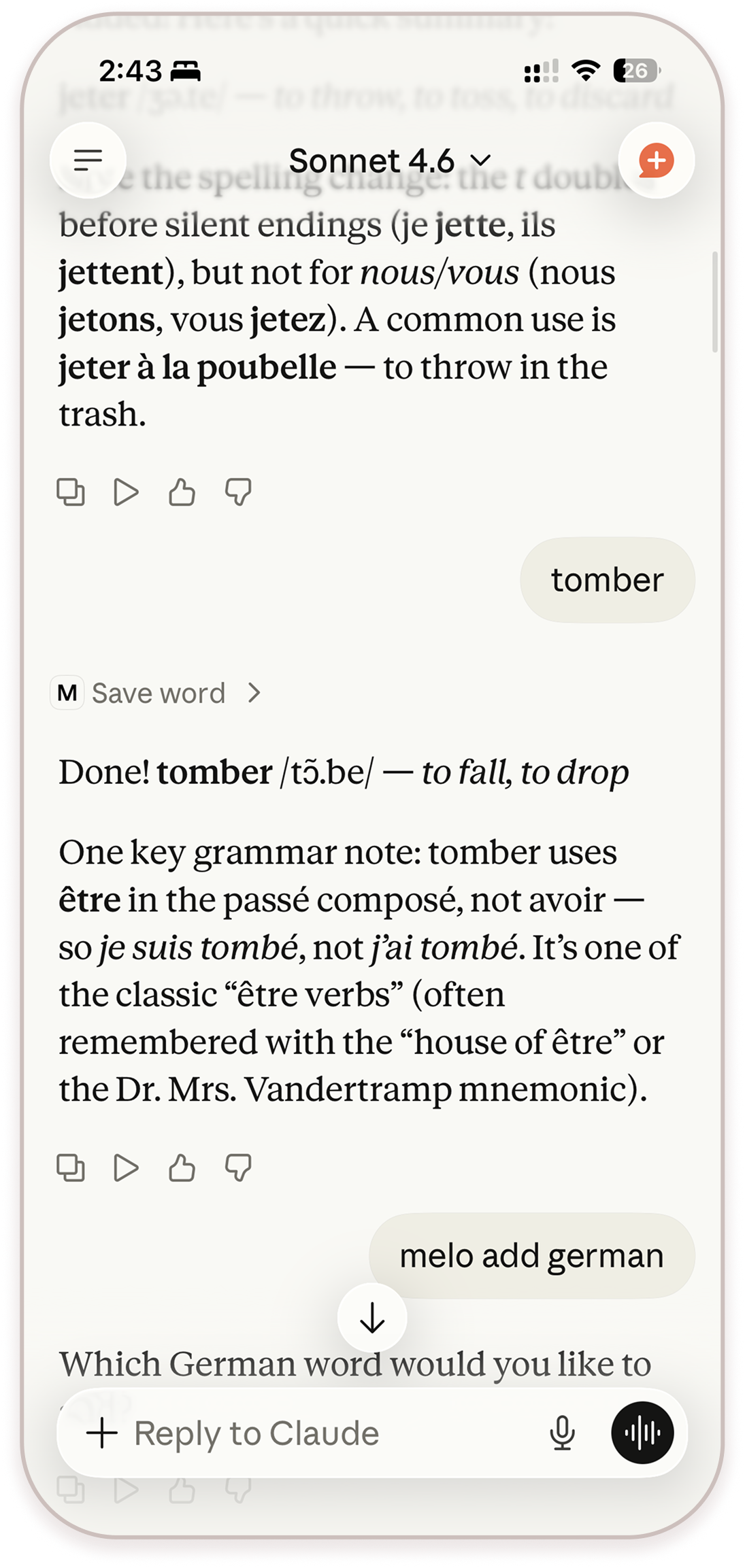

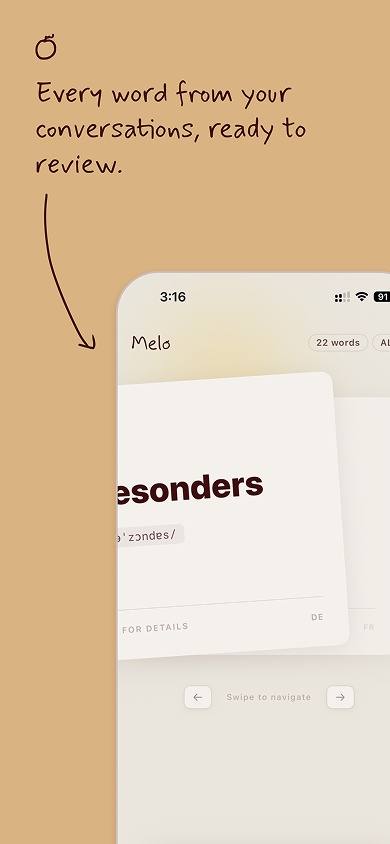

Currently shipping Melo -- a vocabulary tool that lives inside Claude.

I use Claude a lot. When I am reading something in French -- an article, a recipe, a film -- I ask Claude about words I do not know. Claude explains them well. I think: I should write that down. I do not. By tomorrow it is gone.

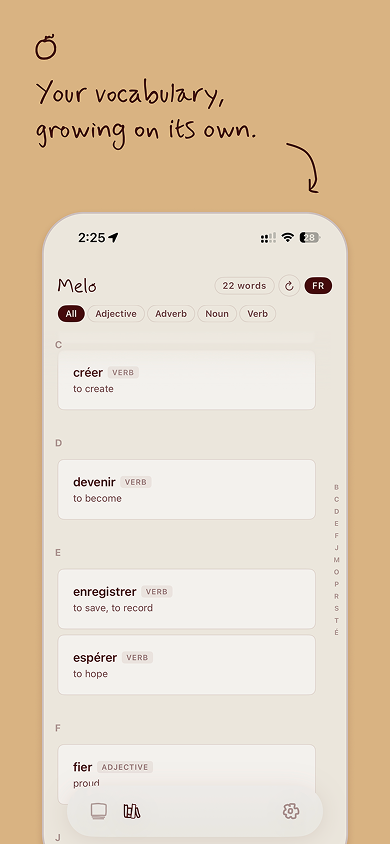

The problem is not that I lack a vocabulary app. I have tried them. Duolingo turns it into a game. Anki gives you a graveyard of flashcards for words you never actually cared about. Neither touches the two things that matter most in French: conjugation and grammatical gender.

The deeper problem: none of these apps know which words I actually encountered. They give you a list someone else decided was important. But the words worth learning are the ones that show up in your own life -- in the film you watched, the conversation you had, the thing you looked up at midnight.

The right place to capture a word is not a separate app you open. It is the moment you are already in -- the conversation with Claude. I was already asking Claude anyway. I just needed Claude to remember.

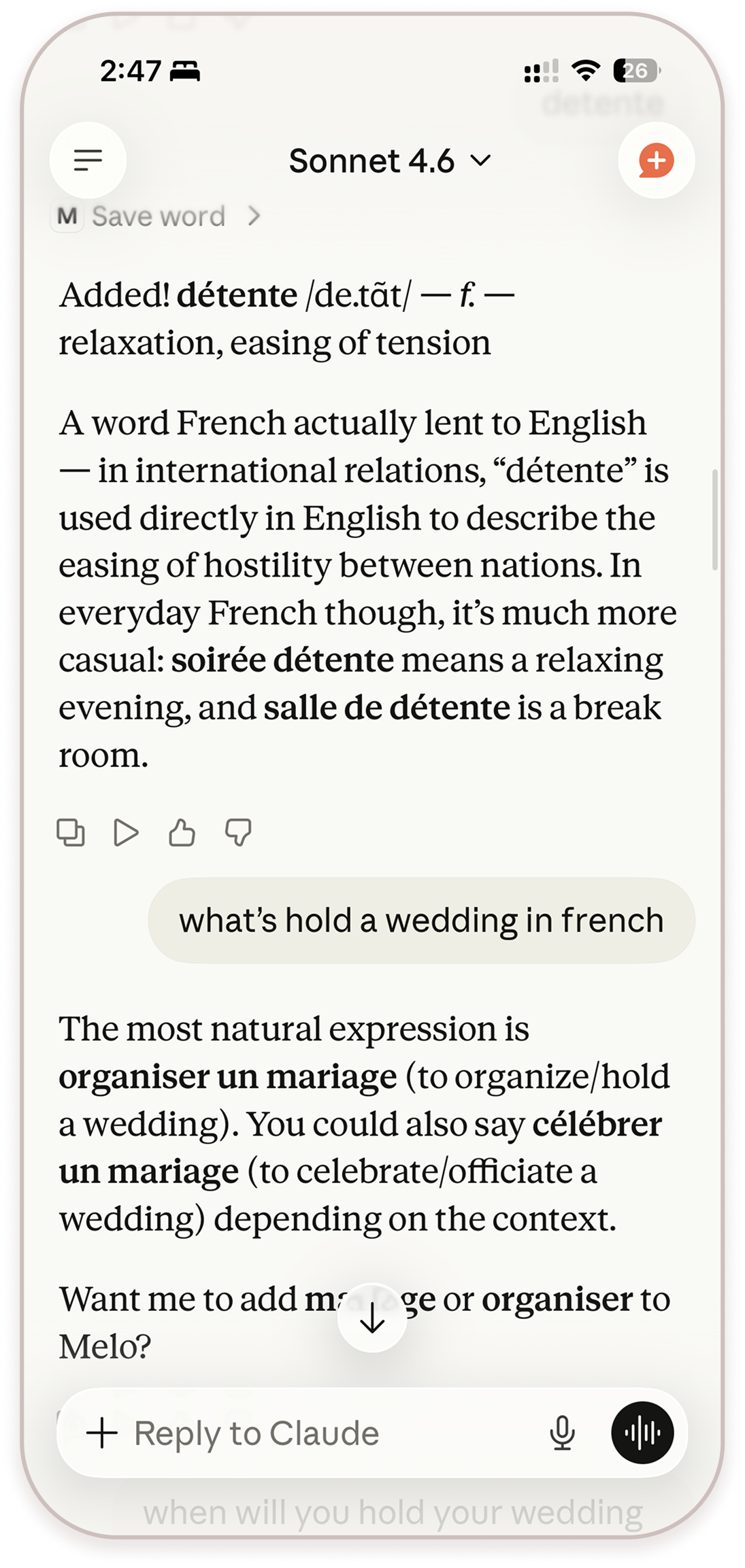

MCP made that possible. Connect Melo to Claude and it quietly saves every word you ask about -- the IPA pronunciation, an example sentence, the meaning, conjugation, grammatical gender -- automatically, in the background. No copy-pasting. No switching apps. The word shows up in Melo because you already asked about it. That is the whole interaction.
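As a rough illustration of what "saving a word" could mean on the Melo side, here is a minimal sketch of a word record and an in-memory store. The field names, the `WordStore` class, and the dedupe-by-lemma rule are all assumptions made for illustration, not Melo's actual schema, and the MCP tool registration itself is left out.

```typescript
// Hypothetical shape of a saved word. Field names are illustrative only.
type Gender = "masculine" | "feminine";

interface WordCard {
  lemma: string;
  language: string; // e.g. "fr"
  ipa: string;
  example: string;
  meaning: string;
  gender?: Gender; // nouns only
  conjugation?: Record<string, string>; // verbs only, e.g. { je: "mange" }
}

class WordStore {
  private cards = new Map<string, WordCard>();

  // Save a card keyed by language + lowercased lemma, so asking about the
  // same word twice updates one card instead of creating a duplicate.
  save(card: WordCard): WordCard {
    const key = `${card.language}:${card.lemma.toLowerCase()}`;
    const merged: WordCard = { ...(this.cards.get(key) ?? {}), ...card };
    this.cards.set(key, merged);
    return merged;
  }

  get size(): number {
    return this.cards.size;
  }
}

const demoStore = new WordStore();
demoStore.save({
  lemma: "pomme",
  language: "fr",
  ipa: "pɔm",
  example: "Je mange une pomme.",
  meaning: "apple",
  gender: "feminine",
});
```

In a real connector, a function like `save` would sit behind a tool that Claude calls in the background whenever a word is explained.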

Built for people who watch French films, read Italian recipes, chat with an AI tutor at midnight.

Sign in with Apple, paste your MCP link into Claude's settings, and that is it.

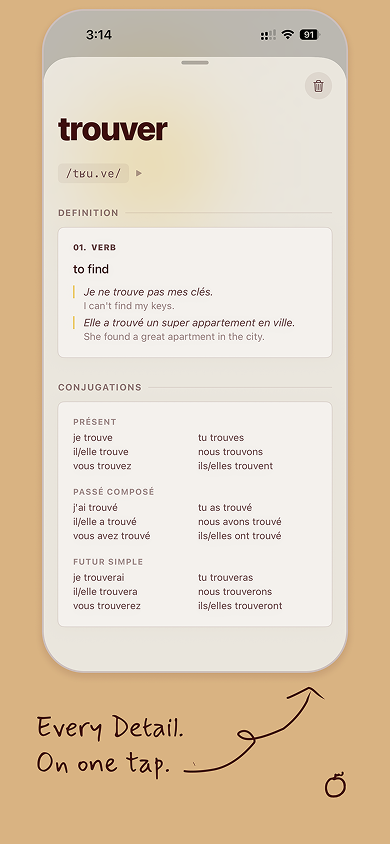

What is on a word card: Pronunciation, example sentence, conjugation table, grammatical gender. These four and nothing more. English translation is deprioritized -- the goal is to stay in the target language as long as possible.

Gender as color: Masculine and feminine nouns are color-tagged consistently. Gender becomes a visual fact you absorb over time rather than memorize consciously.

Flashcard design: The card tests conjugation, not definition. Most apps ask "what does this mean?" -- Melo asks "how do you use it?" A deliberate departure from the Anki model.

No word lists: Melo does not give you a curated list to work through. It only shows you words you actually encountered. The words worth learning are the ones that already showed up in your life.

The sync UX: The hardest problem was making the Claude-to-Melo connection feel invisible rather than bolted on. The word should feel like it was always there.

Melo is live on the App Store and in submission to Claude's Connector Directory. Download on the App Store, connect to Claude, and ask about any word in French, Spanish, Italian, or Japanese.

Most product design assumes the user is the one taking action. The interface is a surface for human decisions. What happens when the AI is the actor?

At an AI startup in 2025, I worked on two products that put this question at the center.

Transparency vs. noise: Showing every AI decision creates log fatigue. Hiding decisions creates distrust.

Control vs. automation: Every intervention UI you add undermines the value of the AI. The upfront configs -- the prompt, the constraints, the operation styles -- need to be expansive and deliberate. Once that step feels owned, the runtime feels earned rather than imposed.

Trust signals: Whether the constraint is a smart contract or something else, the real safety harness is invisible, because users cannot read code. The design challenge is making a technical guarantee feel human: legible limits, not reassuring copy.

The concept: an AI that could safely manage investment positions on your behalf. Hard constraints enforced by smart contracts -- not just UI affordances -- meant the AI could not spend more than you had configured. A genuine architectural safety guarantee, not a design-layer promise.
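The invariant behind that guarantee is simple to state. The sketch below shows it off-chain in TypeScript, purely to illustrate the check. The real enforcement lived in smart contracts, and the `SpendLimit` type and `authorize` function are hypothetical names.

```typescript
// Amounts are integers in the asset's smallest unit (an assumption).
interface SpendLimit {
  cap: number; // maximum the user configured
  spent: number; // total already spent by the agent
}

// Returns the updated limit, or throws if the spend would exceed the cap.
// The point: the agent cannot talk its way past this check.
function authorize(limit: SpendLimit, amount: number): SpendLimit {
  if (!Number.isInteger(amount) || amount <= 0) {
    throw new Error("amount must be a positive integer");
  }
  if (limit.spent + amount > limit.cap) {
    throw new Error("spend exceeds configured cap");
  }
  return { cap: limit.cap, spent: limit.spent + amount };
}
```

Because the check returns a new state rather than mutating the old one, a rejected spend leaves the limit untouched.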

The interface looked like a messaging app. Deliberately. If you are going to have an AI managing your money, natural language is still the most flexible way to express what you want -- "take profit if we're up 20%" is clearer than any form UI. I used interactive in-chat widgets for quick actions that needed precision: confirm a transaction, check a live market chart, review a position. The chat handled intent; the widgets handled execution.

Recreated in lo-fi to illustrate the interaction model -- original product under NDA.

Think of a fund platform where instead of a human portfolio manager running each fund, an AI agent runs it. Users could initiate a vault with a prompt -- an investment style, a risk threshold, a market thesis -- and the agent would allocate and rebalance 24/7 within those parameters.

Early tests showed something genuinely useful: the agent could detect abnormal market signals and exit positions safely ahead of volatility -- something a human manager sleeping in a different timezone simply cannot do. Early performance data showed returns comparable to or higher than equivalent human-managed funds -- at a fraction of the management cost. And because the constraints were enforced at the contract level, it was structurally more secure than a human-managed equivalent.

Where traditional fund platforms ask you to configure parameters -- risk tolerance, allocation limits, rebalancing rules -- this one asks you to describe your strategy in plain language. The AI reads intent and extracts structure, surfacing its confidence on each parameter before you commit.

The confirm step is not about filling in fields. It is about verifying that the AI understood you correctly.
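One way to picture the data behind that confirm step: each extracted parameter carries its own confidence, and the UI highlights the ones below a threshold. This is a sketch under assumptions. The parameter names come from the list above, but the types, the 0 to 1 confidence scale, and the 0.8 threshold are all hypothetical.

```typescript
interface ExtractedParam<T> {
  value: T;
  confidence: number; // 0..1, as reported by the extraction step
}

interface VaultStrategy {
  riskTolerance: ExtractedParam<"low" | "medium" | "high">;
  allocationLimitPct: ExtractedParam<number>;
  rebalanceRule: ExtractedParam<string>;
}

// Names of the parameters the user should double-check before committing.
function needsReview(strategy: VaultStrategy, threshold = 0.8): string[] {
  const keys = Object.keys(strategy) as (keyof VaultStrategy)[];
  return keys.filter((key) => strategy[key].confidence < threshold);
}

const parsedStrategy: VaultStrategy = {
  riskTolerance: { value: "medium", confidence: 0.93 },
  allocationLimitPct: { value: 25, confidence: 0.61 },
  rebalanceRule: { value: "weekly", confidence: 0.85 },
};
const flagged = needsReview(parsedStrategy); // ["allocationLimitPct"]
```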

I art-directed the video for Product 01. It was a live-action, post-futurist western. The setting sits somewhere between frontier town and near-future city. Characters with 80s silhouettes -- leather, volume, a little theatrical -- inside a world that is clean, architectural, slightly cold. The tension between those two aesthetics was intentional: familiar human warmth inside an AI-managed system.

The Product 02 video was motion graphics -- I designed the product and then built the video myself in After Effects and Cavalry. The systematic visual language was a deliberate extension of the product's logic: where the first video leaned into character, this one leaned into structure.

The runtime view. Live positions and P&L alongside the agent's full decision log -- every move plotted on the chart, every rationale one click away. The design goal was not to hide what the AI did. It was to make the AI's reasoning as navigable as the outcome.

In October 2021 I co-founded a startup around a simple but stubborn idea: platforms should not own your information. Your content, your identity, your audience -- these should belong to you, portable across any app, visible to whoever you choose. Not because of ideology, but because the current model is structurally broken. A platform can delete your account and everything you built disappears with it.

The thesis was open information as infrastructure -- a layer beneath applications where your data lives independently of any single platform. We spent three years trying to find the right product shape for that idea, moving from a browser extension to a social identity layer to a search engine. Each product taught us something the previous one could not.

A browser extension that surfaced your online social identity contextually as you browsed. The core product decision was frictionless onboarding through claiming -- you already had content scattered across platforms. The extension let you link existing posts and domains to a portable identity file you owned, without creating anything new or changing how you posted. Content synced automatically in the background.

The zero-friction insight: nothing moves, nothing migrates. The file just starts pointing at what you already made.

60k users in approximately 5 months.

A social identity layer that connected on-chain assets with human-readable profiles. The problem was one profile, wildly different content types -- NFTs, donations, game scores, written posts. Each had a different shape.

The key interaction was the showcase management model, inspired by iOS Home Screen folders: content is clustered by type, but drag-to-list and drag-to-hide let users curate exactly what appears on their profile. Listed items surface on the Vitrine. Everything else stays unlisted but accessible.

320k website visitors -- full design system built from scratch.

A pivot toward open information as a browsing and search experience. If your identity and content should be portable and platform-independent, then so should the way you discover information. We shipped a web app, then iOS and Android, then a search engine format -- each iteration trying to find the right container for the same underlying idea. Deprecated Feb 2024.

iOS + Android shipped -- full mobile design system.

One of the larger design investments was the action icon system -- over 30 icons covering every on-chain and social action type the feed could surface. Each icon uses a combination of shape and background color to encode meaning across two channels, so actions are scannable before reading any text. Shape families are grouped by category: social actions share one visual language, financial actions another, governance a third. The redundancy was intentional -- color alone fails in low-contrast environments, shape alone fails at small sizes. Together they hold.

| Txn Hash | Method | Age | From | Value |
|---|---|---|---|---|
| 0x7f3a...e2c1 | Transfer | 2 hrs | 0x9b2d...44fa | 0 ETH |
| 0x21d8...9b3e | Mint | 1 day | 0xf4a1...c77d | 0.08 ETH |
| 0x88bc...3d9f | swapExact | 3 days | 0x3e7f...a12b | 0.5 ETH |
| 0xf4a1...c77d | castVote | 2 days | 0x88bc...3d9f | 0 ETH |

#0072FF, #2C2C2E, #79B346, #EAC028, #ED675E, #F0F0F4

medium · 8px, large · 12px

base unit: 4px, gap: 8 / 12 / 16px

large · 0 8 64 0px, opacity 6–10%

Kill the form, keep the thesis. Every pivot killed a product but kept the idea. The design work was not wasted -- it taught us what shape the idea needed to be.

Speed is a design constraint. At a small founding team's pace, I had to design systems that could be built fast and iterated on without full rewrites. This made me ruthless about component decisions.

60k users is a signal, not a destination. The identity extension's growth was fast and organic -- but it happened in a specific market moment that did not last. Learning to read that signal early -- and move before the trough -- was the most valuable thing I built in that period. More valuable than any of the products.

Directed a full brand relaunch timed as a pre-heat for mainnet deployment. The brief: move from early-startup visual language to something that could represent a protocol -- durable, systemic, trustworthy, but not corporate. Deliverables: visual system, typography, color, motion language, and launch campaign.

Organized and art-directed three open-house events across two years -- founder/CEO panels, fireside chats, and product sessions.

I also designed a custom NFC wristband for the events. Tap it to another attendee's phone and it pulls up their social profiles from a companion web app. Attendees could give each other a small endorsement, which pushed them up a live leaderboard. A physical expression of the open identity thesis -- and something people actually played with.

Denver (2023) -- 3-day open house. 1,200+ attendees.

Singapore (2024) -- Google APAC HQ, one-day panels. 1,500+ attendees.

Bangkok (2024) -- Google Bangkok, one-day panels + launch party. 2,000+ attendees.

Creative directed a short animated series explaining the concept of open information. Worked with an animator from script to final delivery. The brief was to avoid every aesthetic trap of "blockchain explainer" videos -- no floating coins, no buzzword soup. Closer to a Kurzgesagt-style educational piece: clear concepts, deliberate pacing, visual metaphors that do actual explanatory work.

Every city has wind. Wind has speed, direction, pressure, humidity. None of that is music -- but all of it is data with shape and rhythm.

Windspell asks: what if a city's live weather became a generative instrument? Not a visualization, not a graph. A sound you could actually sit with.

Type a city. The app looks up its coordinates, derives a musical scale from the cultural region, and hashes the city name to a root note. Then it starts polling the weather API.

The visual center of the app is a ring of 72 tick marks -- a physical kalimba model. Nine of those ticks are active tines, each tuned to a scale degree. The ring rotates continuously. Wind speed controls how fast it spins. A needle sits fixed at the live wind direction. When a tine crosses the needle, it gets plucked.

Wind speed is tempo. Wind direction is which note plays. That is the whole mechanic.
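The mechanic can be sketched as a per-frame crossing test. This is a minimal sketch with assumptions: the nine tines are placed on every eighth tick (40° apart), the ring only rotates forward, a frame never advances a full turn, and the `DEG_PER_SEC_PER_MS` constant is a made-up tuning value.

```typescript
const TICKS = 72;
const TINES = 9;

// Assumption: tines sit on every 8th tick, i.e. at 0°, 40°, 80°, ...
const TINE_OFFSETS: number[] = Array.from(
  { length: TINES },
  (_, i) => (i * (TICKS / TINES) * 360) / TICKS
);

// Hypothetical tuning: ring degrees per second for each m/s of wind.
const DEG_PER_SEC_PER_MS = 6;

const mod360 = (x: number): number => ((x % 360) + 360) % 360;

// Advance the (unwrapped) ring angle for one frame.
function advance(ringDeg: number, windSpeed: number, dtSec: number): number {
  return ringDeg + windSpeed * DEG_PER_SEC_PER_MS * dtSec;
}

// Indices of tines that passed the fixed needle while the ring moved
// from prevDeg to nextDeg. The needle sits at the live wind direction.
function pluckedTines(prevDeg: number, nextDeg: number, needleDeg: number): number[] {
  const delta = nextDeg - prevDeg;
  if (delta <= 0) return [];
  const plucked: number[] = [];
  TINE_OFFSETS.forEach((offset, i) => {
    // Degrees this tine still has to travel to reach the needle.
    // A tine exactly on the needle counts as needing a full turn.
    const remaining = mod360(needleDeg - offset - prevDeg) || 360;
    if (remaining <= delta) plucked.push(i);
  });
  return plucked;
}
```

Faster wind means a larger `delta` per frame, so crossings happen more often, and moving the needle changes when each tine reaches it.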

14 musical scales are mapped to geographic regions by latitude and longitude bounding boxes -- Aeolian for Nordic latitudes, Hijaz for the Middle East, Yo for Japan, Blues for the American South, Slendro for Southeast Asia. The root note comes from a djb2 hash of the city name, taken modulo 12: the result picks one of the 12 chromatic semitones starting at C3 (0 to 11 semitones above it).
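A minimal sketch of both lookups follows. The djb2 hash is the standard `hash * 33 + charCode` recurrence seeded with 5381. Everything else is an assumption: the city name is hashed as typed with no normalization, C3 is taken as 130.81 Hz in equal temperament, and the three bounding boxes are rough placeholder coordinates, not the app's actual region table.

```typescript
// djb2 string hash, kept in the unsigned 32-bit range.
function djb2(text: string): number {
  let hash = 5381;
  for (let i = 0; i < text.length; i++) {
    hash = (Math.imul(hash, 33) + text.charCodeAt(i)) >>> 0;
  }
  return hash;
}

const C3_HZ = 130.81; // C3 with A4 = 440 Hz, 12-tone equal temperament

// 0..11 semitones above C3.
function rootSemitone(city: string): number {
  return djb2(city) % 12;
}

function rootFrequency(city: string): number {
  return C3_HZ * Math.pow(2, rootSemitone(city) / 12);
}

interface Region {
  name: string;
  scale: string;
  latMin: number;
  latMax: number;
  lonMin: number;
  lonMax: number;
}

// Placeholder boxes for three of the regions named in the text.
const REGIONS: Region[] = [
  { name: "Nordic", scale: "Aeolian", latMin: 55, latMax: 72, lonMin: -25, lonMax: 40 },
  { name: "Middle East", scale: "Hijaz", latMin: 12, latMax: 42, lonMin: 25, lonMax: 63 },
  { name: "Japan", scale: "Yo", latMin: 24, latMax: 46, lonMin: 123, lonMax: 146 },
];

// First matching box wins; undefined if the point falls outside all boxes.
function scaleFor(lat: number, lon: number): string | undefined {
  const hit = REGIONS.find(
    (r) => lat >= r.latMin && lat <= r.latMax && lon >= r.lonMin && lon <= r.lonMax
  );
  return hit?.scale;
}
```

Hashing the name makes the root note stable: the same city always lands on the same pitch, while the scale comes from where the city sits on the map.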

Pressure, humidity, and gust data then modulate the audio continuously. High pressure brightens the filter. High humidity stretches the reverb tail. Gusty wind adds harmonic overtone content. Wind direction shifts the stereo pan field.

Chord voicing follows the region's timbre. Cold regions (Arctic, Nordic, Andean) voice an open 4th plus a sparse 6th, with long reverb. Warm regions (European, Mediterranean, Blues) voice a full triad. Bright regions (African, Japanese, Oceanic) use a clean open fifth only.

The result: Cairo and Reykjavik do not sound alike. They use different scales, different root notes, different waveforms, different reverb lengths. The same city sounds different at dawn versus midnight -- the background shader uses a real solar position algorithm, so the lighting shifts with actual sun position.

DATA → ROTATION → PLUCK → NOTE → AUDIO

| PARAMETER | RANGE | AUDIO EFFECT | FORMULA |
|---|---|---|---|
| PRESSURE | 960 → 1040 hPa | Filter brightness. Low pressure → murky, dense. High pressure → bright, open. | cutoff × (0.75 + Δp × 0.5) |
| HUMIDITY | 0 → 100% | Reverb wet mix + tail length. Desert → short, direct. Tropical → long, diffuse. | wet = 0.20 + h × 0.35; tail × (0.6 + h × 0.8) |
| GUSTS | 0 → 2× wind speed | Harmonic overtone content. Turbulent air → richer texture. 2nd oscillator at 2× freq. | g = (gusts − spd) / spd; harmonic += g × 0.20 |
| WIND DIR | 0° → 360° | Stereo pan offset. N wind → field shifts left. S wind → field shifts right. | pan += sin(dir_rad) × 0.15 |
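The formula column of the table above translates fairly directly into one pure function. This is a sketch, not the app's code. It assumes Δp and h are normalized to 0..1 over the stated ranges, clamps out-of-range readings, and treats calm air (zero wind speed) as zero gustiness. The `Weather` and `Modulation` field names are made up for illustration.

```typescript
interface Weather {
  pressureHpa: number;
  humidityPct: number;
  windSpeed: number;
  gustSpeed: number;
  windDirDeg: number;
}

interface Modulation {
  cutoffMul: number; // multiplies the base filter cutoff
  reverbWet: number; // wet mix, 0..1
  tailMul: number; // multiplies the base reverb tail length
  harmonicGain: number; // added gain for the overtone oscillator
  panOffset: number; // added to the stereo pan position
}

const clamp01 = (x: number): number => Math.min(1, Math.max(0, x));

function modulate(w: Weather): Modulation {
  // Assumed normalization: 960 hPa -> 0, 1040 hPa -> 1.
  const dp = clamp01((w.pressureHpa - 960) / (1040 - 960));
  const h = clamp01(w.humidityPct / 100);
  // Gustiness relative to the mean wind, guarded against division by zero.
  const g = w.windSpeed > 0 ? clamp01((w.gustSpeed - w.windSpeed) / w.windSpeed) : 0;
  const dirRad = (w.windDirDeg * Math.PI) / 180;
  return {
    cutoffMul: 0.75 + dp * 0.5,
    reverbWet: 0.2 + h * 0.35,
    tailMul: 0.6 + h * 0.8,
    harmonicGain: g * 0.2,
    panOffset: Math.sin(dirRad) * 0.15,
  };
}
```

Keeping the mapping pure makes it easy to re-run on every 60-second poll and diff against the previous result. One property of the pan term as written: `sin` is zero at 0° and 180°, so the offset peaks at 90° and 270°.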

Long reverb (2.4–3.5s). Mid reverb (1.1–2.0s). Short reverb (0.4–0.7s).

The resonator form was not the obvious first choice. Five directions were explored -- a breathing circle, concentric orrery rings, radial petals, a Lissajous curve, and soft diffused arcs. The concentric ring model survived because it was the only one that directly modeled the physical mechanics: a ring sweeping tines past a fixed needle is exactly how a kalimba works. The visual and the audio became one system.

Webcam and cloth simulation were scoped early and removed. A fully procedural GLSL shader -- driven by cultural region hue, live weather uniforms, and solar position -- is faster, more reliable, and creates a more distinct visual identity than a background feed.

WINDSPELL // RESONATOR DESIGN OPTIONS

Three.js for the fullscreen GLSL shader. GSAP for the Resonator ring animation and tine bend physics. Web Audio API for the synthesis chain -- oscillators, bandpass noise, convolver reverb, stereo panning, biquad filter. OpenWeatherMap for live wind data, polled every 60 seconds.

The audio chain runs a continuous drone at half the root frequency and bandpass-filtered wind noise that scales with wind speed. Per-pluck notes layer on top: the main oscillator, a harmony note from the chord voicing, and an overtone oscillator that scales with gustiness. Everything routes through a master gain with a wet/dry reverb split that rebuilds when humidity shifts significantly.

Token costs are invisible. You send a message, something happens, compute is consumed -- and you never feel it. The abstraction is total. For most users this is fine. But for people building on top of LLMs, or just thinking carefully about their AI usage, the invisibility creates a strange disconnect.

Paguro is a small terminal app and macOS widget that converts your token consumption into an in-world currency -- "pp" (paguro points). Spend tokens, earn pp. Use pp to feed your creature, buy it a new shell, keep it alive. Abstract API costs become something you can see, feel, and even care about a little.

Most AI product design is about removing friction and hiding cost. Paguro goes the other direction -- it makes cost visible, but translates it into something playful rather than stressful. The design question is: how much visibility is useful before it becomes anxiety-inducing? Where is the line between awareness and guilt?

There is also something worth exploring in the creature format specifically. A tamagotchi-style companion that depends on your AI usage creates a relationship with your tooling that is genuinely different from a dashboard or a usage meter. It is not extractive -- you are not being warned or penalized. You are just... tending something.

Very early. The concept is clear, the mechanic is defined, early prototyping is underway. No public release yet. Included here because the design thinking is real even if the product is not finished.

I think the most interesting design problem right now is the one nobody has a pattern for yet: what does an interface feel like when the AI is the actor?

Not "here is a chatbox." Not "here is an AI feature inside your existing product." But: the AI just did something on your behalf. It made a call. Now what? How do you design for trust, for legibility, for the user's sense of agency -- when the decision was not theirs?

I have been living inside that question at a startup where AI agents managed real money on behalf of users. No established patterns. You figure it out or the product fails.

I have a stats background (UMich) and a data visualization degree (Parsons). I code what I design. I built Melo -- a vocabulary tool on the App Store that connects to Claude -- because I was learning French and nothing handled conjugation and gender well. That is roughly how I work: see the problem, build the thing.

- Founding designer across 3 consumer products end-to-end: Chrome ext -- web app -- iOS + Android

- 60k users in 5mo (identity ext.) -- 320k website visitors -- full design system ownership

- Designed NFC networking hardware + companion web app for 3 events across Denver, Singapore, Bangkok (4.7k+ attendees)

- Creative directed brand relaunch + animated explainer series; art directed launch video (AE + Cavalry)

- 2025 spin-out: product design lead for AI agent investment platform and iOS trading app

Design: Figma -- design systems -- interaction design -- motion direction -- motion graphics (AE, Cavalry) -- brand identity

Build: React -- HTML/CSS/JS -- Swift -- MCP server development -- Blender

AI: Claude API -- MCP integration -- AI agent UX

Other: developer relations -- event production -- creative direction

Languages: Mandarin -- intermediate French & German -- conversational Japanese